It is all fine to own the prettiest pair of ballet shoes, but the entire exercise is futile when one is unable to walk in them!

The present-day obsession among companies with the selection and fitment of “talent” reflects the increased focus on effective ROI on human capital. Indeed, organisations have come a long way, from running simple aptitude tests to using sophisticated instruments that assess the “right fit” for a role as well as the potential for growth. In a few instances, assessments have also been used to plan succession pipelines and identify Board-level incumbents.

Much can be said in favour of a suitable assessment framework for the selection of key talent. For starters, it minimises the risk of bringing in someone who is a misfit for the role at hand. It also reduces the loss that arises from attrition in existing teams, who might find the new incumbent simply unsuitable for the role. Besides, such an assessment gives organisations a quantifiable, objective way to segregate talent pools, exclude unsuitable talent, and focus energy and resources on the top twenty percent of the talent pool.

Despite the many obvious advantages of using some kind of cognitive or psychometric assessment tool, there has been considerable disgruntlement in various circles about the indiscriminate use of such tools. And not every gripe is unfounded. A large part of the disillusionment comes from the fact that some tests are proving to be rather poor indicators of future success in job roles, and some part comes from confusion around the interpretation and usage of test results.

The criteria for investment in tools

Any decision by an organisation to invest in a selection tool needs to be based on certain criteria. The most critical criterion is whether the tool can be deployed in, and contextualised to, the milieu. For instance, a small to mid-cap company in India with a regional base would not find it suitable to use tools like the Occupational Personality Questionnaire (OPQ), which relies heavily on English as the medium of response. Local participants may sometimes find the questions difficult to interpret and, at other times, find the context impossible to relate to. Contextual validity also relates to the type of test deployed vis-à-vis the nature of the business. For instance, a business that is service driven and largely dependent on spoken communication would do best to avoid written tests and focus more on fluency than on grammatical accuracy.

The second critical aspect to consider is the reliability and validity of the test being used. Reliability means that repeated use of the same test over a period of time would yield more or less consistent results on every occasion. Validity means that the test measures what it is supposed to measure. For example, if it is a trait-based inventory, does it tell you something about the traits of the incumbent? Validity comes in different forms, but the most important measure is the predictive validity of the tool. In the parlance of test construction, predictive validity is the extent to which a score on the test predicts a later outcome on a chosen criterion. For example, the predictive validity of a cognitive test for job performance is the extent to which the score on the test correlates with the incumbent’s performance on the job, which could be measured through performance ratings or supervisor feedback on a rating scale.
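As a rough illustration of the two ideas above, the sketch below computes a test-retest reliability coefficient and a predictive validity coefficient as simple Pearson correlations. All the figures are hypothetical, invented purely for illustration, and the variable names (`test_scores_at_hiring`, `retest_scores`, `performance_ratings`) are assumptions rather than part of any real tool. Test publishers typically use larger samples and more refined statistics, so treat this as a minimal sketch and not a validation procedure.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of cross-products and sums of squares of deviations from the mean
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical data for eight hires (invented for illustration only)
test_scores_at_hiring = [62, 75, 58, 90, 71, 84, 66, 79]   # score at selection
retest_scores = [64, 73, 60, 88, 70, 85, 63, 80]           # same test, some months later
performance_ratings = [3.1, 3.8, 2.9, 4.5, 3.2, 4.1, 3.5, 3.6]  # supervisor rating, 1 to 5

# Reliability: do repeated administrations of the same test agree?
test_retest_reliability = pearson_r(test_scores_at_hiring, retest_scores)

# Predictive validity: does the selection score track later job performance?
predictive_validity = pearson_r(test_scores_at_hiring, performance_ratings)

print(f"Test-retest reliability: {test_retest_reliability:.2f}")
print(f"Predictive validity:     {predictive_validity:.2f}")
```

A coefficient near 1 indicates that the two sets of numbers move together closely, while a coefficient near 0 indicates little linear relationship. A test can be highly reliable and still show weak predictive validity if it consistently measures something unrelated to performance in the role.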

These days, the need for quick adaptation and accessibility means that tests must be deployable through technology. Technology presents advantages, as it implies that a test can be translated into any language, contextualised to different users, and rendered in cultural adaptations. However, in the race towards personalisation, the test design may face challenges related to standardisation, normalisation of scores, and the overall effectiveness of the test itself. At times, the face validity of the test, that is, whether it appears to test-takers to measure what it claims to measure, may be called into question.

An inherent defect

Tests also suffer from an inherent defect in that they measure inherent aptitude or, in the case of personality inventories, personality preferences. These may not play out in quite the same way in the real world, where environmental factors come into play. On the job, factors such as social interactions, the complexity of the environment, the extent of ambiguity, changing leadership, and external pressures could constrain an incumbent’s ability to maximise their potential. While simulation-based immersive tests hold promise in terms of being able to predict the impact of these variables on test performance, they are not always capable of real-world predictive accuracy.

Personality inventories suffer from some other handicaps as well. As an example, the MBTI clearly specifies that it is not to be used as a basis for selection. However, organisations continue to use it as a means of elimination. Other tools such as OPQ and Kolbe’s are designed with the intent of working with individual strengths and abilities. However, if they are not suitably interpreted or not used in the right spirit, they could be misused to reject otherwise capable candidates. Additionally, many large companies run quasi-assessment exercises using a combination of tools and come up with detailed reports. These reports can be problematic at times, and organisations also sometimes adopt standardised tools that measure the wrong competencies entirely.

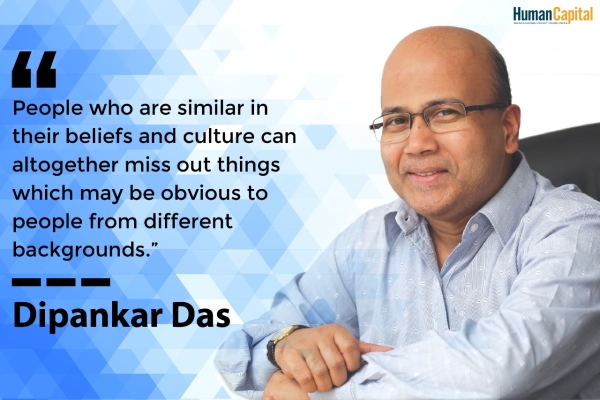

The recent wave of startups has completely disregarded this stringent approach to fitment and focused instead on capabilities. Within the larger framework of diversity and inclusiveness, this makes greater sense, as we need to learn to work with and build on differences rather than harmonise similarities. Besides, many cutting-edge organisations are completely disregarding qualifications and aptitude tests and focusing on outcomes.

Creative organisations do not care much for cognitive or personality tests, and in my experience, tests such as DISC or OPQ are impractical when it comes to predicting success on the job in such organisations.

It is always uncomfortable when the shoes do not fit right. With the multi-generational workforce that is available today, there is a divergence of thought, skills, and abilities, and many of the leading selection tools were created in a bygone era and tested on a fairly homogeneous population. Sampling and validating cost time and money, and one is uncertain how many of these tools have been recalibrated in the light of changing times. Talent acquisition teams need to pay due consideration to the tools they select, evaluate them for purpose and context, and ensure that they measure what needs to be measured. It is all fine to own the prettiest pair of ballet shoes, but the entire exercise is futile when one is unable to walk in them!

Human Capital is a niche media organisation for HR and corporate professionals. Our aim is to create an outstanding user experience for all our clients, readers, employers and employees through inspiring, industry-leading content in the form of case studies, analysis, expert reports, authored articles and blogs. We cover topics such as talent acquisition, learning and development, diversity and inclusion, leadership, compensation, recruitment and many more.
